Ananace

Just another Swedish programming sysadmin person.

Coffee is always the answer.

And beware my spaghet.

- 28 Posts

- 97 Comments

44·3 days ago

The predictable interface naming has solved a few issues at work, mainly when we have to work with expensive piece-of-shit (enterprise) systems, since they sometimes explode if your server changes interface names.

Normally that wouldn’t be an issue, but a bunch of our hardware - multiple vendors and all - initializes the onboard NIC pretty late, which causes the interfaces to switch position almost every other boot.

I’ve personally stopped caring about interface names nowadays though. I just use automation to shove NetworkManager onto the machine and use it to get a properly managed connection instead, so it can deal with all the stupid things that the hardware does.
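As a rough illustration of why unstable names stop mattering once you key on something else: a minimal sketch, assuming a Linux host where each interface exposes its MAC in `/sys/class/net/<name>/address`. The helper name `names_by_mac` is made up for this example, and this is not a description of what NetworkManager does internally.

```python
from pathlib import Path


def names_by_mac(sysfs_root="/sys/class/net"):
    """Map MAC address -> current interface name by reading sysfs.

    Hypothetical helper: the name may change between boots,
    but the MAC of a physical NIC normally does not.
    """
    mapping = {}
    root = Path(sysfs_root)
    if not root.is_dir():
        return mapping
    for iface in sorted(root.iterdir()):
        try:
            mac = (iface / "address").read_text().strip().lower()
        except OSError:
            # Not an interface directory, or no readable address file.
            continue
        # Skip loopback-style all-zero addresses.
        if mac and mac != "00:00:00:00:00:00":
            mapping[mac] = iface.name
    return mapping


if __name__ == "__main__":
    for mac, name in names_by_mac().items():
        print(f"{mac} is currently called {name}")
```

Bonded or bridged interfaces can share a MAC, so this is only good enough for picking out a physical NIC.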

6·22 days ago

Going to be really amazing to play Factorio again without knowing how to solve everything.

1·1 month ago

The EU AI Act classifies AI systems based on risk (in case of mistakes etc.), and things like criminality assessment are classed as an unacceptable risk, and are therefore prohibited without exception.

There’s a great high level summary available for the act, if you don’t want to read the hundreds of pages of text.

2·1 month ago

They couldn’t possibly do that, the EU has banned it after all.

84·1 month ago

To quote Microsoft themselves on the feature:

“No content moderation” is the most important part here, it will happily steal any and all corporate secrets it can see, since Microsoft haven’t given it a way not to.

Well, one part of it is that Flatpak pulls data over the network, and sometimes data sent over a network doesn’t arrive in the exact same shape as when it left the original system, which results in that same data being sent in multiple copies - until one manages to arrive correctly.

We’re mirroring the images internally, not just because their mirrors suck and would almost double the total install time when using them, but also because they only host the images for the very latest patch version - and they’ve multiple times made major version changes which have broken the installer between patches in 22.04 alone.

What is truly bloated is their network-install images, which start with a 14MB kernel and a 65MB initrd, and then proceed to pull a 2.5GB image which they unpack into RAM to run the install.

This is especially egregious when running thin VMs for lots of things, since you now require them to have at least 4GB of RAM simply to be able to launch the installer at all.

Compare this to regular Debian, which uses an 8MB kernel and a 40MB initrd for the entire installer.

Or something larger like AlmaLinux, which has a 13MB kernel and a 98MB initrd, and which also pulls a 900MB image for the installer. (Which does mean a 2GB RAM minimum, but is still only a bit over a third of the size of Ubuntu’s.)
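To put the numbers side by side, here is a quick back-of-the-envelope sketch. The sizes are the ones quoted above, with 2.5GB treated as 2500MB; that conversion and the variable names are just assumptions for the example.

```python
# Sizes in MB, taken from the figures quoted above (2.5GB treated as 2500MB).
installers = {
    "Ubuntu": {"kernel": 14, "initrd": 65, "image": 2500},
    "Debian": {"kernel": 8, "initrd": 40, "image": 0},  # no extra image pulled
    "AlmaLinux": {"kernel": 13, "initrd": 98, "image": 900},
}


def total_mb(parts):
    """Total download size of one network installer, in MB."""
    return sum(parts.values())


totals = {name: total_mb(parts) for name, parts in installers.items()}

# Print each total and how it compares against Ubuntu's.
for name, mb in totals.items():
    ratio = mb / totals["Ubuntu"]
    print(f"{name:10} {mb:5} MB  ({ratio:.0%} of Ubuntu)")
```

This only counts what is pulled over the network, not the RAM needed once the image is unpacked.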

35·2 months ago

If you’re going to post release notes for random selfhostable projects on GitHub, could you at least add the GitHub About text for the project - or the synopsis from the readme - into the post?

I’ve been looking at the rewrite of Owncloud, but unfortunately I really do need either SMB or SFTP for one of the most critical storage mounts in my setup.

I don’t particularly feel like giving Owncloud a win either. They’ve not been behaving in a particularly friendly manner towards the community, and their track record with open core isn’t particularly good, so I really don’t want to end up with a decent product that then steadily mutilates itself to try and squeeze money out of me.

The Owncloud team actually had a stand at FOSDEM a couple of years back, right across from the Nextcloud team, and they really didn’t give me much confidence in the project after chatting with them. I’ve since heard that they’re apparently not going to be allowed to return again either, due to how poorly they handled it.

I’ve been hoping to find a non-PHP alternative to Nextcloud for a while, but unfortunately I’ve yet to find one which supports my base requirements for the file storage.

Due to some quirks with my setup, my backing storage consists of a mix of local folders, S3 buckets, SMB/SFTP mounts (with user credential login), and even an external WebDav server.

Nextcloud does manage such a thing phenomenally, while all the alternatives I’ve tested (including a Radicale backed by rclone mounts) tend to fall completely to pieces as soon as more than one storage backend ends up getting involved, especially when some of said backends need to be accessed with user-specific credentials.

Well, things like the fact that snap is supposed to be a distro-agnostic packaging method despite being only truly supported on Ubuntu is annoying. The fact that it’s locked to the Canonical store is annoying. The fact that it requires a system daemon to function is annoying.

My main gripes with it stem from my job though, since at the university where I work snap has been an absolute travesty:

- It overflows the mount table on multi-user systems.
- It slows down startup a ridiculous amount even if barely any snaps are installed.
- It can’t run user applications if your home drive is mounted over NFS with safe mount options.
- It has no way to disable automatic updates during change-critical times - like exams.

There are plenty more issues we’ve had with it, but those are the main ones that keep causing us problems.

Notably Flatpak doesn’t have any of the listed issues, and it also supports both shared installations as well as internal repos, where we can put licensed or bulky software for courses - something which snap can’t support due to the centralized store design.

Especially if you - like Microsoft - consider “Unicode” to mean UTF-16 (or UCS-2) with a BOM.
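For anyone who hasn’t been bitten by this yet, a small sketch of what that BOM looks like in practice, using Python’s standard codecs. The sample string is arbitrary, and this only illustrates the encoding itself, not any particular Microsoft tool.

```python
import codecs

text = "Kaffe"  # arbitrary sample string

# The generic "utf-16" codec prepends a BOM in the platform's byte order.
with_bom = text.encode("utf-16")
# The explicit-endian codecs write no BOM at all.
without_bom = text.encode("utf-16-le")

has_bom = with_bom.startswith((codecs.BOM_UTF16_LE, codecs.BOM_UTF16_BE))
print(has_bom, len(with_bom), len(without_bom))

# A consumer that assumes UTF-8 chokes on the very first BOM byte.
try:
    with_bom.decode("utf-8")
    utf8_ok = True
except UnicodeDecodeError:
    utf8_ok = False
print("decodes as UTF-8:", utf8_ok)
```

The two extra bytes at the front are the BOM, and they are exactly what trips up tools expecting plain UTF-8.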

Do you have WebP support disabled in your browser?

(Wasn’t aware my pict-rs was set to transcode to it, going to have to fix that)

I’m currently sitting with an Aura 15 Gen 2, and I’m definitely happy with it.

I do wish they’d get their firmware onto LVFS, but that’s about my main complaint.

Been using the KeyDB fork for ages anyway, mainly because it supports running in a multi-master / active-active setup, so it scales and clusters without the ridiculousness that is HA Redis.

5·4 months ago

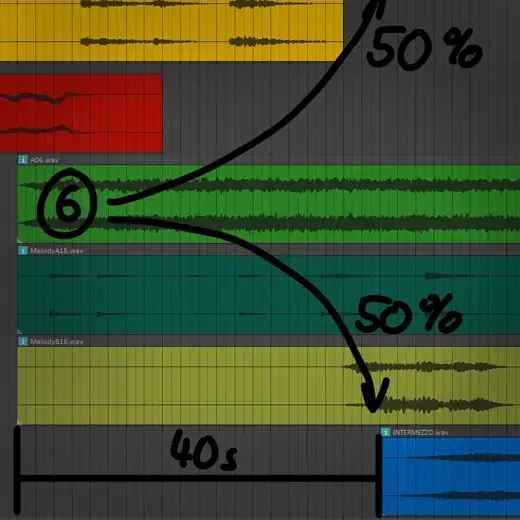

I feel like this could go really well together with Piet.

Just imagine; an album consisting of a bunch of Velato programs with Piet code as the artwork.

7·4 months ago

The first official implementation of directly connecting WhatsApp to another chat system - using APIs built specifically for the purpose instead of third-party bridges - was indeed done against the Matrix protocol, as part of a collaboration in testing ways to satisfy the interoperability requirements of the EU Digital Markets Act.

So not a case of a third-party bridge trying to act as a WhatsApp client enough to funnel communication, but instead using an official WhatsApp endpoint developed - by them - explicitly for interoperation with another chat system.

I think the latest update on the topic is the FOSDEM talk that Matthew held this February.

Edit: It’s worth noting that the goal here is to even support direct E2EE communication between users of WhatsApp and Matrix, something that’s not likely to happen with the first consumer-available release.

81·4 months ago

Well, the first tests for interconnected communication with WhatsApp were done with Matrix, so that’s a safe bet.

You’re lucky to not have to deal with some of this hardware then, because it really feels like there are manufacturers who are determined to rediscover as many solved problems as they possibly can.

Got to spend way too much time last year with a certain piece of HPC hardware that can sometimes finish booting, and then sit idle at the login prompt for almost half a minute before the onboard NIC finally decides to appear on the PCI bus.

The most ‘amusing’ part is that it does have the onboard NIC functional during boot, since it’s a netbooted system. It just seems to go into some kind of hard reset when handing over to the OS.

Of course, that’s really nothing compared to a couple of multi-socket storage servers we have, which sometimes drop half the PCI bus on the floor when under certain kinds of load, requiring them to be unplugged from power entirely before the bus can be used again.